Because CAPTCHA is such an elegant tool for training AI, any given test could only ever be temporary, something its inventors acknowledged at the outset. With all those researchers, scammers, and ordinary humans solving billions of puzzles just at the threshold of what AI can do, at some point the machines were going to pass us by. In 2014, Google pitted one of its machine learning algorithms against humans in solving the most distorted text CAPTCHAs: the computer got the test right 99.8 percent of the time, while the humans got a mere 33 percent.

Category: Science

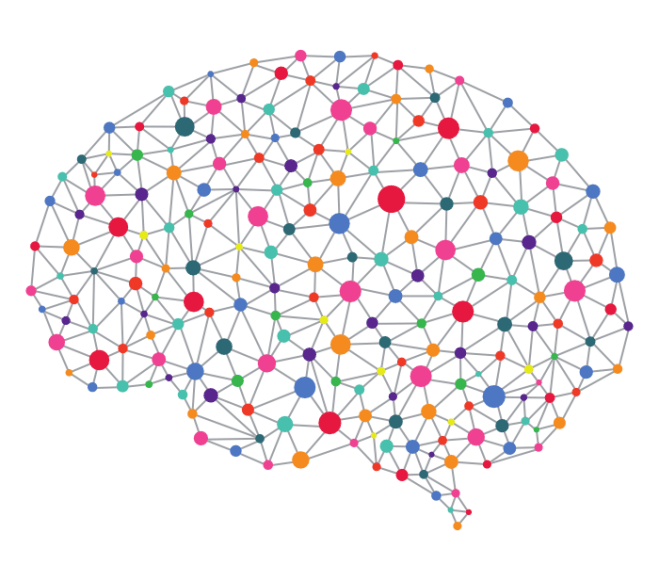

Is Consciousness Fractal?

This intersection between our experience and fractals may run even deeper than Taylor’s evolutionary hypothesis. “Any act of creativity is an act of physiology,” Goldberger says. “The extent that we are fractalized in our essence makes you think that maybe we would project that onto the world and see it back, recognize it as familiar. So when we look at and create art, and when we decide what to take as high art, are we in fact possibly looking back into ourselves? Is creation in part a re-creation?”

“It wouldn’t come as a shock to me if consciousness is fractal,” Taylor says. “But I have no idea how that will manifest itself.”

To Become a Better Investor, Think Like Darwin

On financial innovations (read: derivatives and securitizations) and the need for more collaboration:

People respond to incentives, and so if we want to take on much bigger challenges, we need to collaborate across thousands and in some cases hundreds of thousands of people. How do you get 100,000 people to work together? It’s not that easy. In the old days, it was religion and before that it was simple fiat rules, tyranny. The Egyptians built some beautiful pyramids, but they did that with hundreds of thousands of slaves over decades. If we rule out slavery as a possible means of societal advances, there really isn’t any other choice. If we need 100,000 people to cure cancer, to deal with Alzheimer’s, to figure out fusion energy and climate change…I don’t know of any other way to do that other than financial markets: equity, debt, proper financing and proper payout of returns. I think that in many cases [finance] probably is the gating factor. That, to me, is the short answer to the question about why finance is so important.

The Dark Secret at the Heart of AI

As the technology advances, we might soon cross some threshold beyond which using AI requires a leap of faith. Sure, we humans can’t always truly explain our thought processes either—but we find ways to intuitively trust and gauge people. Will that also be possible with machines that think and make decisions differently from the way a human would? We’ve never before built machines that operate in ways their creators don’t understand. How well can we expect to communicate—and get along with—intelligent machines that could be unpredictable and inscrutable?

Illustration: Adam Ferriss

Is Artificial Intelligence Permanently Inscrutable?

The result is that modern machine learning offers a choice among oracles: Would we like to know what will happen with high accuracy, or why something will happen, at the expense of accuracy? The “why” helps us strategize, adapt, and know when our model is about to break. The “what” helps us act appropriately in the immediate future.

It can be a difficult choice to make. But some researchers hope to eliminate the need to choose—to allow us to have our many-layered cake, and understand it, too. Surprisingly, some of the most promising avenues of research treat neural networks as experimental objects—after the fashion of the biological science that inspired them to begin with—rather than analytical, purely mathematical objects.